I started writing code when I was ten. Eight years later, the thing I grew up doing is changing faster than at any point since I began. This is how I see it.

Before it had a name

I joined Cursor about 700 days ago, back when it was a small startup that had raised an $8M seed round and had fewer than 40,000 users. GPT-4 was the best model available and Claude 3 Opus had just come out. Today that same company is worth $29.3 billion.

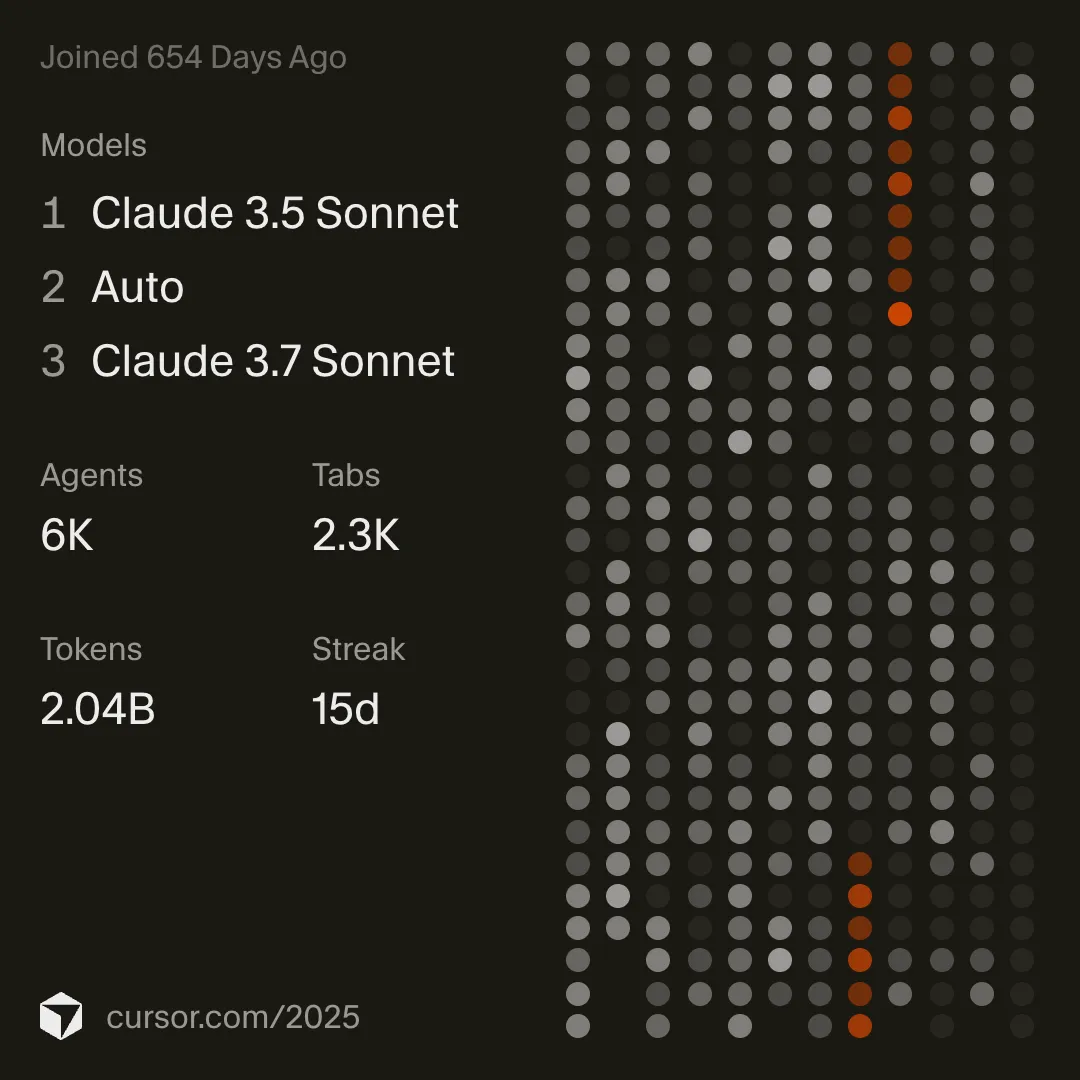

My Cursor year-end stats from December 2025. When I first opened this app, none of these models existed yet.

My OpenAI account goes back even further. I created it around April 2022, seven months before ChatGPT launched. I was fifteen and messing around with GPT-3 in the playground because I’d read somewhere that it could write code for you.

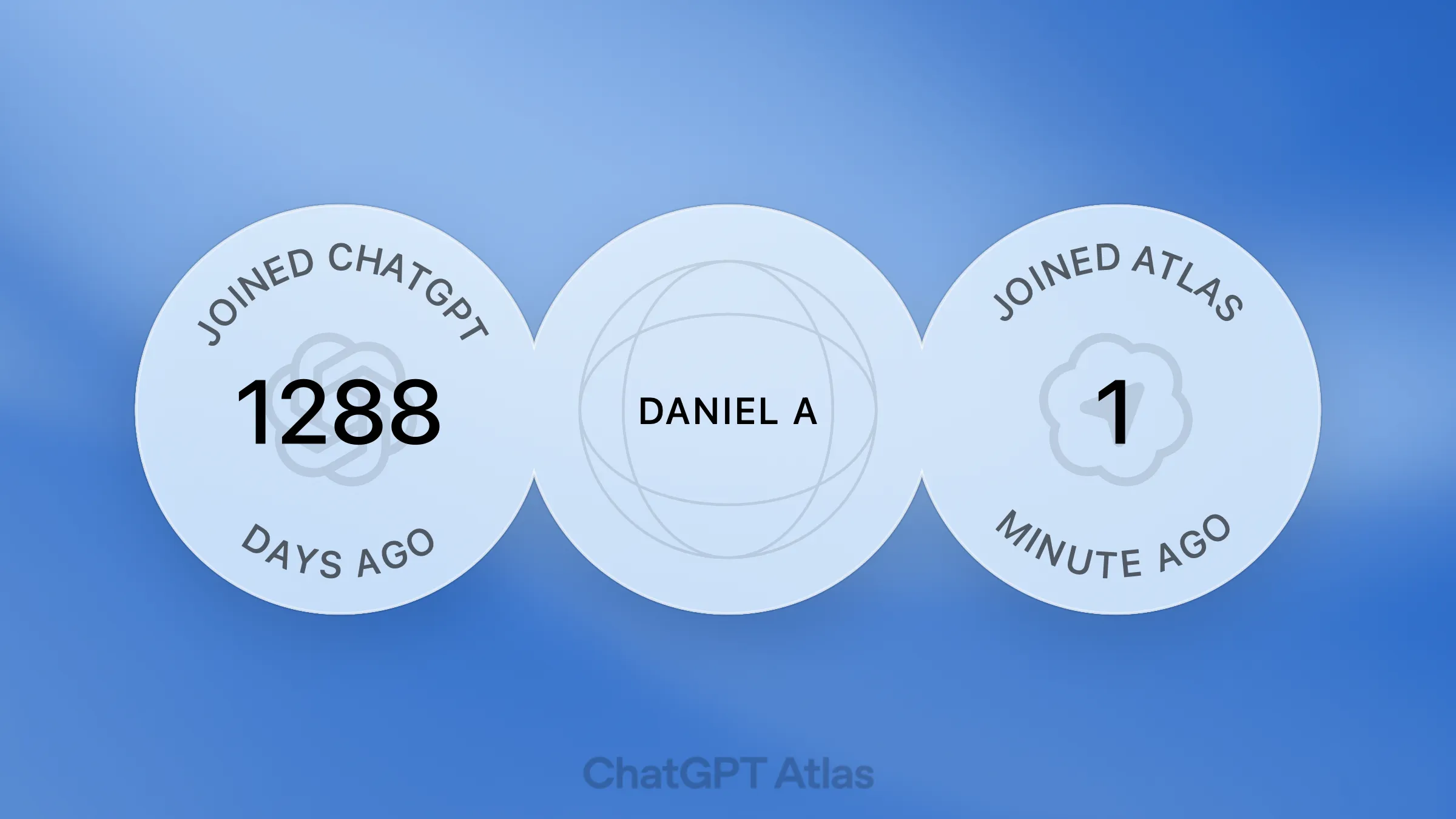

My OpenAI account age as of October 2025, created months before ChatGPT even existed as a product.

My “Year with ChatGPT” stats from December 2025. The 11.78K em-dashes were my biggest gripe about the tool.

Andrej Karpathy coined “vibe coding” in early 2025. By the time the discourse started, I’d been doing this for over a year already.

The problem with vibe coding tools

Tools like v0, Bolt, and Lovable produce slop. I’ve tried all of them and I’ve never used an output from any of them in a project I cared about. They’re fine for internal dashboards and throwaway prototypes, but the moment someone tries to build a real product with them, things fall apart in ways that are ugly and dangerous.

A friend of mine vibe-coded a basketball tournament system where people could sign up, form teams, pay into prize pools, and compete for winnings.

This is what happens when non-programmers put production software in front of real users with AI. The surface looks fine, and everything underneath is broken. My friend’s app is a small-scale example, but imagine the same negligence applied to a fintech product or a healthcare system.

AI is brilliant if you know what you’re doing

AI-assisted coding is the biggest productivity gain in the history of programming, and I rarely write code by hand anymore. The only times I do are when I’m doing it for fun or when I’m sitting exams at school where they still make us write code on paper.

DHH said something on Lex Fridman’s podcast that stuck with me: “The joy is to command the guitar yourself. The joy of a programmer, of me as a programmer, is to type the code myself.” I feel that. I grew up with programming and I still enjoy doing it myself. But for real work, I use AI constantly.

The difference is that I understand what it generates. When Claude writes a React component I can read it and tell what’s wrong. When it scaffolds a database schema I know if something’s missing. I know what to look for because I’ve written enough of it by hand. Take that experience out of the loop and you get my friend’s basketball app.

What actually matters

The “AI can only do boilerplate” take is outdated. Karpathy recently left an agent running for two days doing autonomous ML research, and it found twenty real improvements that transferred to larger models. But what gets lost in stories like that is why it worked for him: he has two decades of experience in that exact domain, and he certainly didn’t copy a prompt off LinkedIn to get there.

An LLM will go back and forth with anyone. Someone with no background can tell it to build them an app and they’ll get something back. The tool doesn’t gatekeep. But the output you accept is only as good as your ability to evaluate it. Someone who’s been writing code for years will catch problems that someone who hasn’t will walk right past. AI didn’t close the knowledge gap - it made it invisible.

Language models also have real limitations that are worth understanding. They hallucinate and pattern-match against training data, degrading unpredictably on novel problems in what Karpathy calls jagged intelligence. Every major lab is still working on this, and AGI remains further out than the marketing suggests. A study by METR found developers using AI felt twenty percent more productive but were actually nineteen percent slower. The non-technical founders vibe-coding their startups are a version of this at scale - building on foundations they can’t inspect, accumulating technical debt at the speed of generation with nobody who can pay it down.

What actually matters is knowing what you’re building and being able to tell whether what you got back is correct. That separates useful output from dangerous output, and no amount of tooling has changed that.

What worries me

The bar for software quality is dropping, and I mean quality beyond whether the thing runs. The details that make you want to keep using something disappear when the person building it doesn’t have the experience to know what good looks like. There’s a massive difference between “it works” and “I want to keep using this,” and vibe-coded products almost never cross that line.

There’s also a commoditization problem that not enough people are thinking about. If your product is vibe-coded, what’s stopping me from vibe-coding my own version in a day? People already do this. The moat for software used to be that it was hard to build, and that’s gone. The new moat is craft. If you don’t deeply understand what your user needs, you have a template that anyone can reproduce.

I also think about people starting to code right now in 2026. I spent years writing bad code and figuring out why it was bad. I got lucky with my timing - I had eight years of that before AI made it optional. I don’t know what it looks like for someone who starts today and never has to go through that. The understanding has to come from somewhere.

Where this is going

The leverage that AI gives a single programmer is unlike anything that’s existed before, and I already feel it in my own work. But I think the thing that’s going to define the next decade is that the people who understand what’s underneath these tools will get dramatically more out of them than the people who don’t. Both groups will build software, and only one group will know what to do when something goes wrong. I’ve been at this long enough to know that the years I spent learning how things actually work are the reason I can get so much out of these tools today, and I don’t think that changes anytime soon.